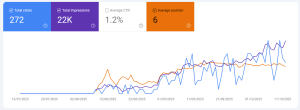

Short-form video is now the most-used content format by marketers, with 91% of businesses using video as a marketing tool and videos under 60 seconds getting 2.5x more engagement than long-form content. So if you’re running a home services business and not making short-form video, you’re leaving attention on the table.

The problem is that home repair videos are harder to make exciting on TikTok or Reels. In the fight for attention, your video of a door repair may lose to someone else doing a skateboard trick and falling on their face.

So I wanted to test something: can I create a viral-style video using AI that uses familiar For You Page (FYP) tropes, and then naturally leads into a home repair ad?

The Idea: Hijack Popular Tropes

If you’ve spent any time on TikTok or Instagram Reels, you’ve seen certain video formats that get massive engagement. The “falling over” videos. Unboxing reactions. Mukbangs. ASMR satisfying reels.

These formats work because people already recognise the pattern. They stop scrolling because the format is familiar, and they want to see how it plays out.

My idea was to take one of these viral tropes, and let it lead into something unexpected that ties back to a home repair scenario.

What I Made

The scenario: a Malaysian Chinese teenage girl, maybe 17 years old, is sitting on a bathroom countertop putting on lipstick on camera. She’s lip-syncing to a song, bobbing her head and shoulders to the beat. Her younger sister is hanging around in the same room.

Then the counter gives way. She drops out of frame. Her younger sister has a horrified look on her face.

The girl reappears and says “Don’t worry, Recommend.my will fix it.” (Recommend.my is the website of my home services platform.)

The whole thing is designed to feel like an organic, accidental moment that you’d see on someone’s FYP. The ad part only comes at the end.

You Can’t One-Shot This

If you’ve ever tried AI video generation, you might think: just describe the whole scene in one prompt and let the AI do the rest.

That doesn’t work.

Current AI video tools can generate maybe 5 to 10 seconds of video per generation. And even within those few seconds, the more things you ask to happen, the worse the output gets. If you try to describe “girl sits on counter, lip-syncs, counter breaks, she falls, sister reacts” in a single prompt, you’ll get a mess.

Instead, you need to think like an animator.

The Keyframe Approach

Traditional animation works by drawing “key frames” first. These are the important poses and moments. Then you fill in the frames between them to create smooth motion. This concept of keyframes is also used in CSS animations and video editing.

The same principle applies to AI video. You need to plan out your key moments, generate a static image for each one, and then use AI video to generate the motion between those keyframes.

For my bathroom counter video, I mapped out four key frames:

- The setup – Girl sitting on the counter, putting on lipstick, looking at camera

- The lean – She leans back slightly, shifting her weight

- The collapse – The counter has broken, sister is shocked

- The recovery – Girl pops back up into frame, laughing and saying the ad message

Each of these is a distinct moment. And each one becomes a separate AI generation task.
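If it helps to write the plan down before generating anything, here is a minimal sketch of the key frames above as a small Python structure. It is only an illustration: the `KeyFrame` class, the `generation_tasks` helper, and the clip naming are all made up for this example, and every motion prompt here is a placeholder rather than a prompt I actually used.

```python
from dataclasses import dataclass


@dataclass
class KeyFrame:
    name: str           # short label for the moment
    image_prompt: str   # what the still image should show
    motion_prompt: str  # what should move in the clip that starts from this image


# Image descriptions follow the key frame list above.
# Motion prompts are placeholders for illustration only.
SHOT_PLAN = [
    KeyFrame("setup",
             "Girl sitting on the counter, putting on lipstick, looking at camera",
             "She sways her shoulders and raises the lipstick to her lips."),
    KeyFrame("lean",
             "She leans back slightly, shifting her weight",
             "She leans back a little further."),
    KeyFrame("collapse",
             "The counter has broken, sister is shocked",
             "The sister covers her mouth with her hands."),
    KeyFrame("recovery",
             "Girl pops back up into frame, laughing",
             "She laughs and speaks to the camera."),
]


def generation_tasks(plan):
    """Turn the plan into one generation task per key frame, in order."""
    tasks = []
    for index, frame in enumerate(plan, start=1):
        tasks.append({
            "clip": f"{index:02d}_{frame.name}",  # hypothetical naming scheme
            "image_prompt": frame.image_prompt,
            "motion_prompt": frame.motion_prompt,
        })
    return tasks


for task in generation_tasks(SHOT_PLAN):
    print(task["clip"], "|", task["motion_prompt"])
```

Numbering the clips up front also makes them sort in the right order later when you bring them into an editor.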

The Tools I Used

For generating the key frame images, I used Nano Banana 2. It’s Google’s latest image generation model, built on their Gemini architecture. The main reason I chose it is that it’s fast, it maintains character consistency across multiple images (so the girl looks like the same person in each frame), and it handles Asian faces well. That last point matters a lot if you’re creating content for a Southeast Asian audience.

I’ve also previously used FLUX LoRAs on fal.ai to create AI images of myself. But for fictional characters, Nano Banana 2 was easier because I didn’t need to train a custom model.

For turning those key frame images into video clips, I used Kling AI. Kling is developed by Kuaishou, a Chinese short-video platform. It supports both text-to-video and image-to-video, and it’s become a solid choice for cost-effective AI video generation. I’ve talked about Kling before when I first experimented with AI video from selfies.

With Kling’s image-to-video feature, I uploaded each key frame image and wrote a short motion prompt describing what happens in that 5-second clip. For example, for key frame 1, my motion prompt was something like:

“Girl gently sways her shoulders side to side, bobbing her head rhythmically. She raises a lipstick to her lips. The camera stays still. Handheld phone camera feel.”

You don’t describe the whole scene again in the motion prompt. The image already shows the scene. The prompt just tells the AI what should move and how.
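To keep motion prompts consistent across clips, you could assemble them from a few short parts. This is a rough sketch, not a Kling feature: `build_motion_prompt` is a hypothetical helper, and the limit of three movements per clip is an arbitrary assumption of mine to force simple prompts.

```python
def build_motion_prompt(movements,
                        camera="The camera stays still.",
                        style="Handheld phone camera feel.",
                        max_movements=3):
    """Join a few short movement sentences with a camera note and a style note.

    max_movements is an assumed limit, not a rule from any video tool.
    """
    if not movements:
        raise ValueError("describe at least one movement")
    if len(movements) > max_movements:
        raise ValueError(
            f"{len(movements)} movements is a lot for one clip; "
            "consider splitting it into more key frames"
        )
    # Normalise each movement so it ends with exactly one full stop.
    parts = [movement.strip().rstrip(".") + "." for movement in movements]
    parts.extend([camera, style])
    return " ".join(parts)


prompt = build_motion_prompt([
    "Girl gently sways her shoulders side to side, bobbing her head rhythmically",
    "She raises a lipstick to her lips",
])
print(prompt)
```

The helper only describes movement, camera, and style. It never restates the scene, because the key frame image already carries that.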

Stitching It Together

After generating the video clips for each key frame transition, I ended up with five or six short clips. Some of them took two or three re-rolls before I got something usable. Faces can distort if there’s too much camera movement, so I kept most of my prompts focused on small, subtle motions.

Then I brought everything into CapCut for final editing. I use CapCut for all my short-form video editing anyway, so this was familiar territory.

In the editor, I trimmed the clips, matched the pacing to a music track, and added the “ad card” at the end with the home repair call-to-action. The total video came out to about 22 seconds (below).
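I did the edit by hand in CapCut, but if you want a quick rough cut to check the sequence first, a command-line tool like ffmpeg can join the clips in order. The sketch below is an alternative to the CapCut step, not what I used. It builds the list file for ffmpeg's concat demuxer and the matching command; the file names are hypothetical, and joining with `-c copy` assumes all clips share the same codec and resolution.

```python
import shlex


def concat_list(clip_paths):
    """Build the text of an ffmpeg concat-demuxer list file."""
    lines = []
    for path in clip_paths:
        # Inside a single-quoted entry, a literal quote is written as '\''
        escaped = str(path).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    return "\n".join(lines) + "\n"


def concat_command(list_file, output_file):
    """Return the ffmpeg command that joins the listed clips without re-encoding."""
    return ["ffmpeg", "-f", "concat", "-safe", "0",
            "-i", str(list_file), "-c", "copy", str(output_file)]


# Hypothetical file names, in playback order.
clips = ["01_setup.mp4", "02_lean.mp4", "03_collapse.mp4", "04_recovery.mp4"]
listing = concat_list(clips)
print(listing, end="")

# Save `listing` as clips.txt, then run the printed command in a terminal.
print(shlex.join(concat_command("clips.txt", "rough_cut.mp4")))
```

This only gives you a straight join. Trimming, music, and the ad card still need a proper editor.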

What I Learned

The keyframe method is essential. Don’t try to describe a complex multi-event scene in a single AI video prompt. Break it down into key moments, generate images for each, then generate short videos between them. This is the same workflow that professional AI video creators use for short films and ads.

Character consistency is still tricky. Even with Nano Banana 2’s consistency features, the character’s face can shift slightly between frames. This is less obvious in a fast-cut, phone-shot style video (which is actually what you want for FYP content). But if you’re going for a polished look, expect to re-generate a lot.

Keep motion prompts simple. The less movement you ask for in each clip, the better the result. Small head tilts, blinking, subtle hand movements. The moment you ask for a character to do something dramatic like fall off a counter, you need to be very specific about the camera angle, and even then it might take several attempts.

The trope does the work. The reason this concept works as an ad is that the viewer is hooked by a familiar format before they even know it’s an ad. By the time the “counter collapses” moment happens, they’re already invested. The ad card at the end is just a punchline. That’s much more effective than starting with “Are you looking for home repair services?”

This whole thing cost almost nothing. Between Nano Banana 2 (free tier via Google Gemini) and Kling AI (credit-based, a few dollars), the total cost was under $10 for the final video. Compare that to hiring actors, a videographer, and renting a location. Even for a scrappy social media ad, you’d be looking at hundreds of dollars minimum. However, if you end up running multiple generations to get the video looking just right, then your costs may increase proportionately.
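To see how re-rolls affect the bill, here is a back-of-the-envelope sketch. All the numbers are assumptions for illustration: 5-second clips, three attempts per clip on average, and a made-up price of 35 cents per generation. Check your own tool's credit pricing before relying on it.

```python
import math


def estimate_generations(target_seconds, seconds_per_clip, attempts_per_clip):
    """Estimate the number of clips and total video generations for one video."""
    clips = math.ceil(target_seconds / seconds_per_clip)
    generations = math.ceil(clips * attempts_per_clip)
    return clips, generations


def estimate_cost_dollars(generations, cents_per_generation):
    """Total cost in dollars, given a per-generation price in cents."""
    return generations * cents_per_generation / 100


# Assumed inputs: a 22-second video, 5-second clips, 3 attempts per clip.
clips, generations = estimate_generations(22, 5, 3)
print(clips, "clips,", generations, "generations")

# 35 cents per generation is a hypothetical price, not Kling's actual rate.
print("estimated cost in dollars:", estimate_cost_dollars(generations, 35))
```

Doubling the attempts per clip doubles the generation count, which is why keeping each motion prompt simple also keeps the cost down.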

Why This Matters for SMEs

If you run a home services business, a renovation company, or even a property management firm, this approach gives you a way to create scroll-stopping content without a production crew.

The workflow is:

- Come up with a viral trope that can naturally tie into your service

- Plan your key frames (I recommend 4 to 6 frames for a 15-20 second video)

- Generate key frame images using an AI image tool like Nano Banana 2

- Generate video clips between key frames using Kling AI or similar

- Edit everything together in CapCut or your preferred editor

- Add your CTA at the end

For context, short-form video ad spending is projected to reach $111 billion in 2025. Whether you’re posting organically or running it as a paid ad, this kind of content grabs attention in a way that a standard “before and after” reel simply doesn’t.

I’ve been building AI-powered content workflows for a while now, from SEO article automation to case study generation from job site photos. Message me if you need help!

WATCH BELOW: I explain using keyframes to generate videos
